The environment, however, is populated only when evaluating a lambda, which will insert the values v, g above in the environment. This ensures that no unevaluated thunks are fed to a function, unless they are already in the environment. To get an eager (CBV) semantics, we can force the argument before the call: interpret env (App e1 e2) = case interpret env e1 of (As luqui states, operationally GHC would reduce this in a call-by-need fashion). Since Haskell is lazy, the code interpret env (App e1 e2) = case interpret env e1 of Of course eager corresponds to CBV, and lazy to CBN. In a denotational-style interpreter like the posted one, I'd speak of eager vs lazy semantics, instead of CBV/CBN. In an operational-style interpreter which reduces terms representations, you can properly speak of CBV/CBN. related to the choice of redex in lambda term reduction.

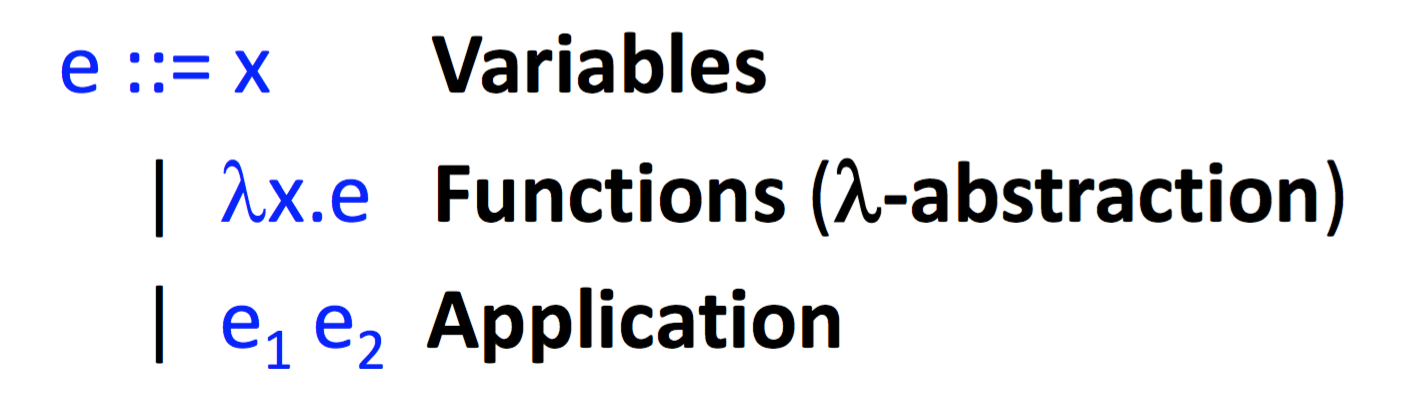

Alternatively, one could compile with and use simple lambdas and applications instead instead of the above machinery.ĬBV/CBN are concepts related to the evaluation strategy of the lambda calculus, i.e. Now, instead of storing a thunk in the environment, we store a partial application object, and then evaluate it separately at different call sites.įorce and delay are required to prevent GHC from floating out function bodies, thereby recovering sharing. When implementing call-by-value lambda-calculus in Haskell, should I force the evaluation of the arguments to a function in the object language (i.e., the call-by-value lambda-calculus) to get around the call-by-need evaluation order of the meta-language (i.e. Interpret env (App e1 e2) = delay (case force (interpret env e1) of Interpret env (Lam x e) = delay (F (\v -> force (interpret ((x, v):env) e))) Interpret env (Var x) = delay (case lookup x env of We could modify the data definition: data Value a = V a | F ((() -> Value a) -> Value a)Īnd also have the interpreter return explicit thunks: interpret :: -> Expr -> () -> Value a

F (Value a -> Value a) has Value a as argument, so we have no choice but to pass in some already interpreted value, and it'll be call-by-need under Haskell reduction behaviour.

I don't think proper call-by-name is possible with the original data definition.

0 Comments

Leave a Reply. |

AuthorWrite something about yourself. No need to be fancy, just an overview. ArchivesCategories |

RSS Feed

RSS Feed